Before plotting, `par(mfrow = c(2, 2))` changes the panel layout to 2 x 2. You will often see numbers next to some points in each plot. They are extreme values based on each criterion and are identified by their row numbers in the data set.

The diagnostic plots show residuals in four different ways. Let’s take a look at the first type of plot.

The first plot shows if residuals have non-linear patterns. There could be a non-linear relationship between predictor variables and an outcome variable, and the pattern could show up in this plot if the model doesn’t capture the non-linear relationship. If you find equally spread residuals around a horizontal line without distinct patterns, that is a good indication you don’t have non-linear relationships.

Let’s look at residual plots from a ‘good’ model and a ‘bad’ model. The good model data are simulated in a way that meets the regression assumptions very well, while the bad model data are not. What do you think? Do you see differences between the two cases? I don’t see any distinctive pattern in Case 1, but I see a parabola in Case 2, where the non-linear relationship was not explained by the model and was left out in the residuals.

The next plot shows if residuals are normally distributed. Do residuals follow a straight line well or do they deviate severely? It’s good if residuals are lined up well on the straight dashed line. What do you think? Of course, they wouldn’t be a perfect straight line, and this will be your call. I would not be concerned by Case 1 too much, although an observation numbered as 38 looks a little off.
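To make the ‘good’ versus ‘bad’ comparison concrete, here is a minimal sketch that simulates one data set meeting the regression assumptions and one with a quadratic relationship that a straight-line model misses. The simulated data, the seed, and the object names (`good_fit`, `bad_fit`) are assumptions for illustration only; they are not the data behind the figures in this post.

```r
set.seed(1)                        # arbitrary seed so the sketch is reproducible
n <- 100
x <- runif(n, min = -3, max = 3)

# Hypothetical 'good' data: linear signal plus constant-variance normal noise
y_good <- 2 + 1.5 * x + rnorm(n)
# Hypothetical 'bad' data: the true relationship has a quadratic term
y_bad <- 2 + 1.5 * x + x^2 + rnorm(n)

# Both models are straight-line fits, so the second one is misspecified
good_fit <- lm(y_good ~ x)
bad_fit  <- lm(y_bad ~ x)

old_par <- par(mfrow = c(1, 2))    # two panels side by side
plot(good_fit, which = 1)          # which = 1 selects the residuals vs fitted panel
plot(bad_fit, which = 1)
par(old_par)                       # restore the previous layout
```

In the second panel the residuals should trace a curve, because the quadratic term the model left out ends up in the residuals.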

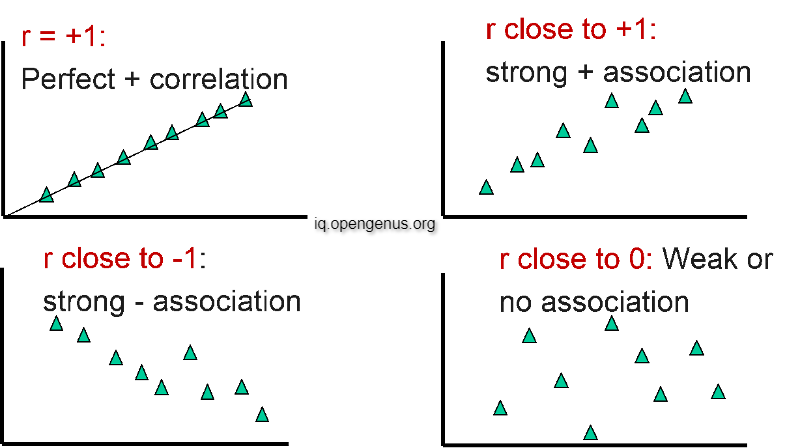

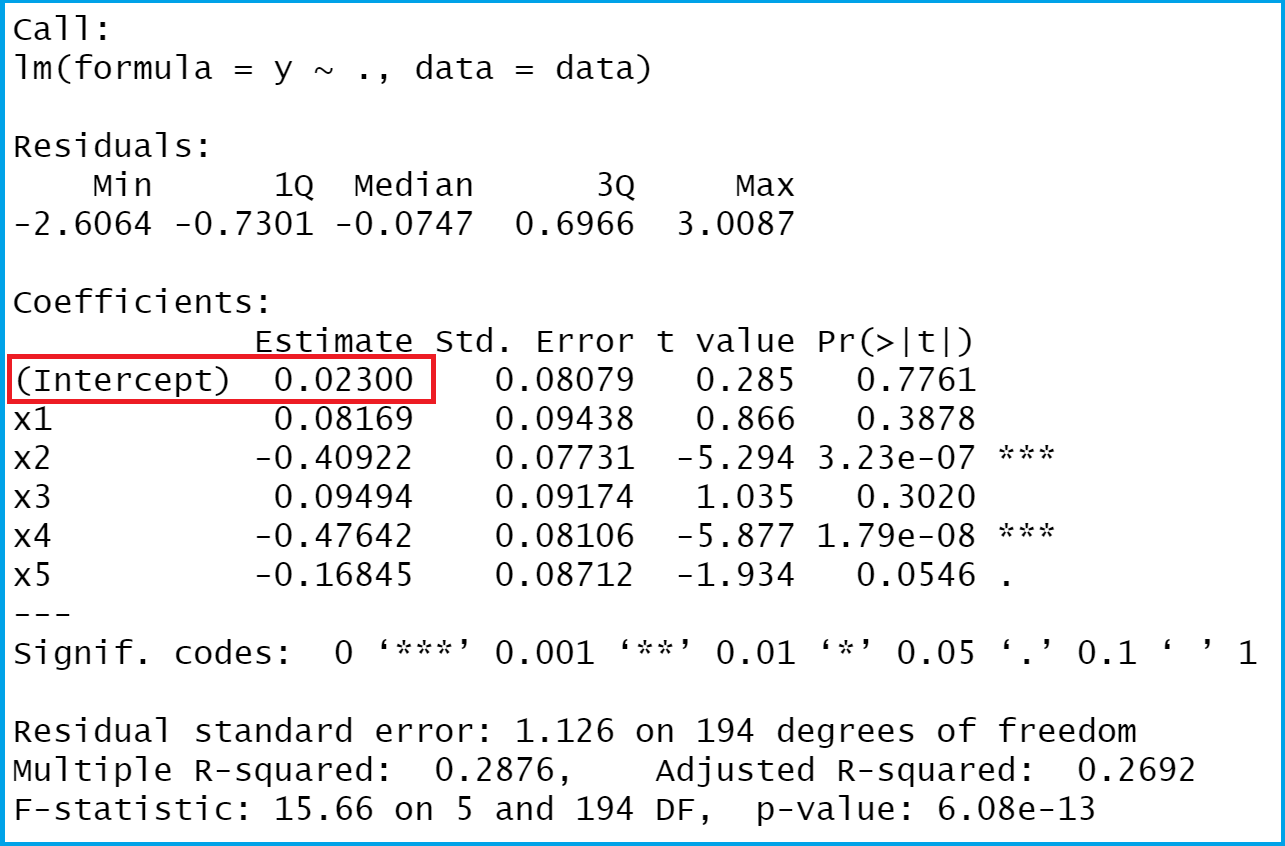
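The normality check can also be drawn on its own. The sketch below assumes R’s built-in `women` data set rather than the Case 1 and Case 2 data in the figures: `plot()` with `which = 2` draws the built-in normal Q-Q panel, and `qqnorm()` with `qqline()` on standardized residuals builds a similar plot by hand.

```r
data(women)                                  # built-in data: height and weight
fit <- lm(weight ~ height, data = women)

old_par <- par(mfrow = c(1, 2))              # two panels side by side
plot(fit, which = 2)                         # built-in normal Q-Q panel

std_res <- rstandard(fit)                    # standardized residuals
qqnorm(std_res, main = "Q-Q by hand")
qqline(std_res, lty = 2)                     # dashed reference line
par(old_par)                                 # restore the previous layout
```

Points that sit far from the dashed line at either end are the ones to look at more closely; how far is too far remains a judgment call.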

You ran a linear regression analysis and the stats software spit out a bunch of numbers. You might think that you’re done with analysis. After running a regression analysis, you should check if the model works well for the data.

We can check if a model works well for the data in many different ways. We pay great attention to regression results, such as slope coefficients, p-values, or R², which tells us how much outcome variance a model explains. Residuals could show how poorly a model represents data. Residuals are what is left over of the outcome variable after fitting a model (predictors) to data, and they could reveal patterns in the data unexplained by the fitted model. Using this information, not only could you check if linear regression assumptions are met, but you could improve your model in an exploratory way.

In this post, I’ll walk you through built-in diagnostic plots for linear regression analysis in R (there are many other ways to explore data and diagnose linear models other than the built-in base R function, though!). It’s very easy to run: just use `plot()` on an `lm` object after running an analysis. Then R will show you four diagnostic plots one by one.

Sometimes it's nice to quickly visualise the data that went into a simple linear regression, especially when you are performing lots of tests at once. Here is a quick and dirty solution with ggplot2. Let's try it out using the iris dataset in R, loaded with `data(iris)`. Its columns are `Sepal.Length`, `Sepal.Width`, `Petal.Length`, `Petal.Width` and `Species`.

Create `fit1`, a linear regression of `Sepal.Length` on `Petal.Width`, with `fit1 <- lm(Sepal.Length ~ Petal.Width, data = iris)`. The summary of the fit reports a residual standard error of 0.478 on 148 degrees of freedom, a multiple R-squared of 0.669 and an adjusted R-squared of 0.667. Normally we would quickly plot the data in R base graphics with `plot(Sepal.Length ~ Petal.Width, data = iris)`. This can be plotted in ggplot2 by starting from `ggplot(iris, aes(x = Petal.Width, y = Sepal.Length))` and adding `stat_smooth(method = "lm")`.

However, we can create a quick function that will pull the data out of a linear regression, and return important values (R-squared, slope, intercept and p-value) at the top of a nice ggplot graph with the regression line.
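One way such a helper could look is sketched below. The function name `plot_regression` and the title layout are assumptions for illustration, not the original author's code, and the sketch assumes a simple regression with a single predictor fitted by `lm()`.

```r
library(ggplot2)

# Hypothetical helper: scatter plot with the fitted line and key statistics
# in the title. Assumes `fit` is a one-predictor model fitted with lm().
plot_regression <- function(fit) {
  vars  <- names(fit$model)              # response first, then the predictor
  stats <- summary(fit)
  coefs <- stats$coefficients            # estimates, std. errors, t and p values
  label <- paste0(
    "Adj R2 = ",      signif(stats$adj.r.squared, 3),
    "  Intercept = ", signif(coefs[1, "Estimate"], 3),
    "  Slope = ",     signif(coefs[2, "Estimate"], 3),
    "  P = ",         signif(coefs[2, "Pr(>|t|)"], 3)
  )
  ggplot(fit$model, aes(x = .data[[vars[2]]], y = .data[[vars[1]]])) +
    geom_point() +
    stat_smooth(method = "lm", formula = y ~ x, col = "red") +
    labs(title = label, x = vars[2], y = vars[1])
}

fit1 <- lm(Sepal.Length ~ Petal.Width, data = iris)
p <- plot_regression(fit1)
print(p)
```

Reading the variables from `fit$model` means the same call works for any one-predictor `lm` fit, which is handy when you are running many regressions in a row.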
